Discover the Power of Graph Databases

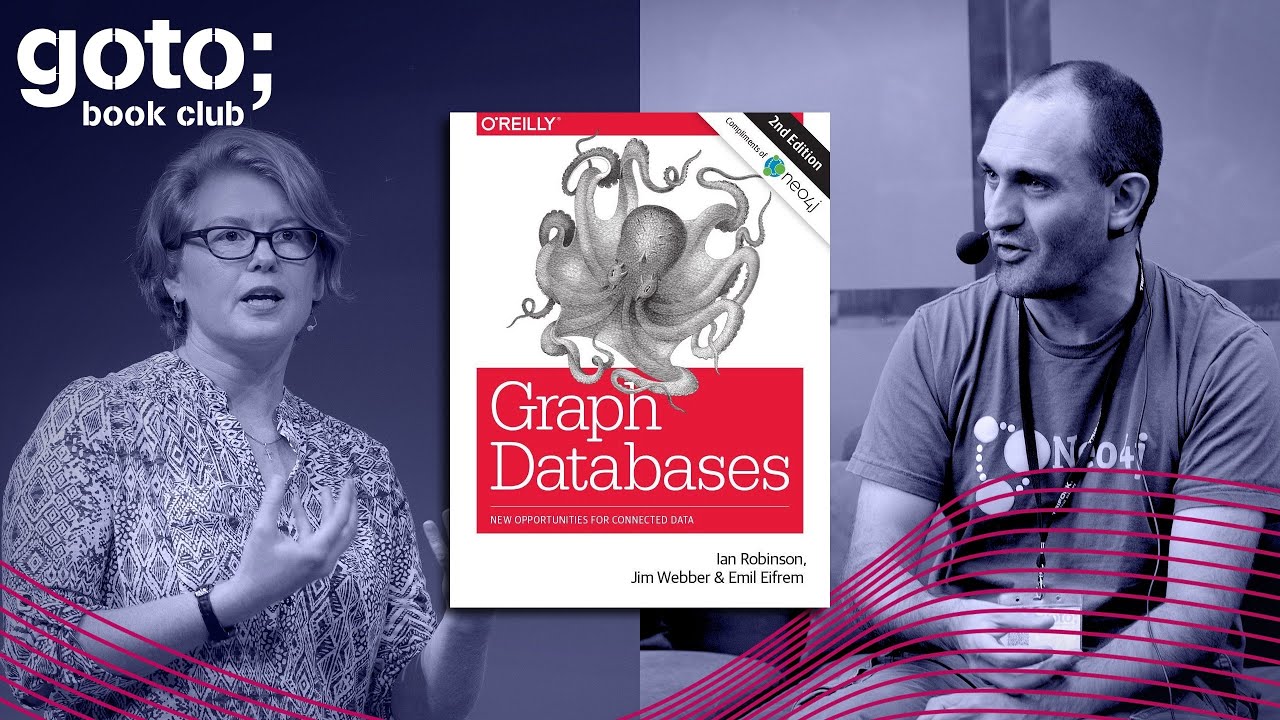

Discover the amazing world of graph databases and how you can leverage graphs to understand your data. Jim Webber, co-author of "Graph Databases," and Nicki Watt, CTO at OpenCredo, take you on a journey that starts with the definition of graphs, walks you through case studies, and highlights pitfalls.

Transcript

This is an excerpt from the Book Club interview with Jim Webber and Nicki Watt. Full episode coming up soon.

Preben Thoro: Welcome to the two of you. Thanks a lot for joining us here.

Jim Webber: Sure. Thanks for organizing.

Nicki Watt: Thanks. Very much looking forward to the chat.

Nicki Watt: Welcome, Jim. Good to see you again.

Jim Webber: Hello, Nicki. It's lovely to chat with you. It's been a while.

What is a graph database?

Nicki Watt: It has been a while, indeed. And we're talking about your wonderful book, "Graph Databases."

Jim Webber: Yes, I was thinking about this before our chat and actually the first version of this book I wrote with Ian Robinson in the kitchen of your old office about 9 or 10 years ago. So we've got some history here, right? I mean, thankfully we talk about the updated version or the second edition this time around, but it's a lovely thing that we have that shared history that we used to clog up your tea room with our writing and rambling.

Nicki Watt: Yes, we enjoyed it immensely because we got to actually ask you all the difficult questions as well. So it was really good.

Jim Webber: Fun times.

Nicki Watt: Excellent. Well, let's kick off, and let's start with something simple. What is a graph and what is a graph database?

Jim Webber: Yes. It turns out that's still a common question. In short, a graph is a data model. It comes from maths, but you don't have to be worried about that or get all mathematical about it. Most software people find it very straightforward. A graph is a model where we have nodes connected via relationships to other nodes. And because we're software folks, it's pretty simple to understand. We've got circles that typically represent entities, and we have relationships between those circles. If you can draw a circle and an arrow and another circle, you already understand graphs.

For example, the kind of graphs that everyone is familiar with, things like social graphs: I could say that I have a node that represents me as Jim, and I can have an arrow representing follows. I could label that arrow as "follows," and have a node representing you, Nicki. And then the graph would be Jim follows Nicki. So actually it's a really simple model. You're not constrained by tables or kind of insane normal forms and joins and all that stuff. It's very much more like an object or domain model as we think about it.

If we take those simple idioms of nodes and relationships, then we can build really big, sophisticated high-fidelity models. And then if you're into it, there's also a bunch of maths and ML and other stuff you can apply to it. Or if you're just like a normal human software developer, then you can just build really big high-fidelity models and ask questions of it and get answers. So I could ask, for example, "Who do I follow?" or, indeed, just as cheaply, "Who follows me?" which is kind of an expensive thing to ask, for example, in relational technology.
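The circle, arrow, circle idea can be sketched in a few lines of Python. This is only an illustration: the `TinyGraph` class, its method names, and the extra "Ross follows Jim" relationship are made up for the example and are not Neo4j's API. It shows one way to keep an index in each direction so that "Who do I follow?" and "Who follows me?" are equally cheap lookups.

```python
from collections import defaultdict


class TinyGraph:
    """Toy directed graph with labeled relationships (illustrative only)."""

    def __init__(self):
        # (node, label) -> set of nodes, one index per direction
        self._outgoing = defaultdict(set)
        self._incoming = defaultdict(set)

    def relate(self, source, label, target):
        """Store source -[label]-> target in both indexes."""
        self._outgoing[(source, label)].add(target)
        self._incoming[(target, label)].add(source)

    def out(self, node, label):
        """Nodes reached from `node` by following `label` arrows forwards."""
        return set(self._outgoing.get((node, label), set()))

    def into(self, node, label):
        """Nodes whose `label` arrows point at `node`."""
        return set(self._incoming.get((node, label), set()))


graph = TinyGraph()
graph.relate("Jim", "FOLLOWS", "Nicki")
graph.relate("Ross", "FOLLOWS", "Jim")  # hypothetical extra relationship

who_jim_follows = graph.out("Jim", "FOLLOWS")
who_follows_jim = graph.into("Jim", "FOLLOWS")
```

Both questions are a single dictionary lookup here; a real graph database achieves the same symmetry with its own storage structures rather than Python dictionaries.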

Key takeaway no. 1

Graph databases are great options for storing and querying highly connected data including offering high query performance.

Graph Use Cases

Nicki Watt: Great, great. So you've explained that graph databases are really good for highly specialized connected data use cases, and using the social network as an example is great. But not everybody's building social networks out there. That's always the classic example I think people like to use. But practically, what are the other kinds of common use cases that people would actually use graphs for that are not in the standard social network sort of land?

Jim Webber: Yes. It turns out that when I was clogging up your tea room back in the day, we always used to say social because it was the thing that people understood. But it turns out that graphs are reasonably horizontal, in the same way that relational is reasonably horizontal or documents are probably reasonably horizontal. What we found over the years is that graph models are natural for quite a broad array of use cases. Actually, my first graph use case was retail recommendations, so looking at your purchase history and looking at what would complement your purchase history and then recommending it to you. That's what got me into graphs. That's what pulled me into this whole domain.
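A retail recommendation of this kind can be pictured as a short walk over a graph: customer to product, product to other customers, and on to their other products. The Python sketch below is a hedged illustration of that walk; the purchase data and the `recommend` function are invented for the example, and a production system would use far richer signals.

```python
from collections import Counter

# Hypothetical purchase history: customer -> set of products bought.
purchases = {
    "alice": {"laptop", "mouse", "laptop bag"},
    "bob": {"laptop", "mouse"},
    "carol": {"laptop", "laptop bag", "usb hub"},
    "dave": {"kettle"},
}


def recommend(customer, purchases, limit=3):
    """Suggest products bought by customers who share a purchase with `customer`.

    Walks customer -> product -> other customer -> other product and counts
    how often each new product is reached.
    """
    owned = purchases[customer]
    scores = Counter()
    for other, basket in purchases.items():
        if other == customer or not (owned & basket):
            continue  # skip the customer themselves and unrelated shoppers
        for product in basket - owned:
            scores[product] += 1
    # Sort by score (descending), then by name so the result is deterministic.
    ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return [product for product, _ in ranked[:limit]]


suggestions = recommend("bob", purchases)
```

With this toy data, the items most often bought alongside Bob's purchases come first in `suggestions`.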

But equally, I remember some of your team working on oil and gas pipeline optimizations. Of course, it's a graph problem when you think about it for a moment because the pipelines are a network. And then of course you say, "Oh, network? Well, then it's IT, it's ops, it's telecoms." But then actually our lives are very connected. You know, your healthcare history is a graph. It's a graph of interventions and clinicians and hospitals and prescriptions and all that kind of stuff going on. And then so it's things like building materials, it's a complex graph of all the widgets I need to create an airplane or a laptop or a car.

What we're finding now is that, although it's very easy for us to talk about graphs in the familiar sense, you know, Facebook, Twitter, that kind of thing, a lot of the time problems that we would solve creatively with relational databases are very humanely solved with graphs. Again, it just comes back to that circle, stick, circle idiom. And any idiot can do that. And I know because I am one of those idiots. So if I can do it, anyone else can feel profoundly powerful with graph tech.

Nicki Watt: That's great. Could you maybe talk about what graphs are not good for? I think we're probably quite biased in terms of really, we think graphs are good for everything. But there are cases where they're not a good fit. So could you speak about what those are?

Jim Webber: Yes, absolutely. So I think if you've got like just a bulk data storage problem, you want to store your web server logs, you want to build a counter for votes on "Britain's Got Talent," you know, something like that, there are better databases out there, right, for doing that kind of undifferentiated bulk storage. You know, everyone knows them, you want to build a counter for "Britain's Got Talent" votes, use Cassandra. It's great for that. You know you want to continue to run your legacy payroll system? Well, you've already got Oracle. Carry on doing Oracle because it's square, uniform data. It's not going to cause you any bother.

But what we're observing is that when you want to do the kind of higher value stuff where I don't just want to analyze the kind of aggregate votes for an act on "Britain's Got Talent" but I want to understand the social demographics in the way that people are voting, which of course in the real world does manifest itself, right? We have an important election coming up in the U.S., for example, so understanding sentiment, that's when you get back into graphs because graphs are a really useful analytical tool for that kind of stuff, which is more important or more valuable, I think than just the aggregate numbers.

So it really is like, if you've got something that's a bulk storage problem, you know, web server logs, all that stuff, go find your data lake, dig out your Hadoop, deploy your Cassandra. These are wonderful pieces of kit. But when you want to bring the analytical stuff to bear, then a graph would still be a good fit for that stuff. And in fact, because graphs sadly don't rule the whole world yet, what we often see is these things playing together, right? For example, if I've got, you know, a petabyte of NASA mission data or something, there's not a lot of value in having that all graphed. But there is a lot of value in having a graph window on top of that, to bring the kind of analytical techniques that graphs provide onto a window of that data that's of particular interest. So we see those things playing together nicely all the time now, which is very pleasing.

Key takeaway no. 2

Useful across multiple domains where data is highly connected — but beware of cases where this is not the case, or where for example you need to do bulk queries with unknown starting points. Graphs are good for highly connected data modeling scenarios but not for disconnected data or undifferentiated bulk storage.

Graphs in action: FinCEN use case

Nicki Watt: Right. Great. Excellent. So, interestingly enough, one of the things I was recently looking at was the FinCEN Files revelations that came out. This is where the ICIJ, which is the International Consortium of Investigative Journalists, used graph technology to reveal the role of global banks in industrial money laundering, which is a fantastic way of seeing who's doing what where, or who's doing the wrong things where. So could you maybe speak a little bit about that and explain how graphs helped to actually blow the lid on this whole story?

Jim Webber: So the ICIJ are pretty busy people, right? So they've done a few things like this. I guess most famously Panama Papers with that huge data leak from South America, which by the way, caught the prime minister of Iceland. Also caught former British Prime Minister David Cameron's father having completely, I'm sure, above-board offshore accounts for perfectly good reasons like he lives in an oppressive regime, something like that.

The value of graphs here is that they're a really good structure for understanding flows of money. So the ICIJ, when they did the FinCEN stuff, so this is the United States Financial Crimes Enforcement Network, that's the acronym, and the idea is that any financial institution when it has a suspected dodgy transaction, it has to report it. "Hey, I think this is iffy in some way."

Discover how graph databases can help you manage and query highly connected data. This second edition includes new code samples and diagrams, using the latest Neo4j syntax, as well as information on new functionality. Learn how different organizations are using graph databases to outperform their competitors. With this book’s data modeling, query, and code examples, you’ll quickly be able to implement your own solution.

So what happened was that somewhere on the order of 3,000 files were dumped, what are called SAR files, the Suspicious Activity Report files, representing around $2 trillion worth, with a T, of suspicious money movements. It turns out that many banks are either victims or unwittingly allowing suspicious money to flow through their networks. That's really hard to see. You've worked in banking. I've worked in banking. The systems there are huge, they're complicated, they're voluminous and they're interconnected in a myriad of different ways. Compliance is a nightmare, right, because you just can't see the wood for the trees.

So what the ICIJ did was, they got these documents and they said, "Well, look, this is a terribly difficult problem. Let's have a look at how each of these documents, how each of these suspicious activities, relates to payer and payment and payee and that kind of thing." When you connect these things together, circle, arrow, circle, you see it starts to form a graph. That graph represents flows of money from institutions through the banks through to other institutions. Using that, it's actually very straightforward to start asking questions like: why is this particular flow going through this major U.S. bank from dodgy company to dodgy company with seemingly no useful economic activity?

And those are the kind of questions that a graph database can easily answer because you're just pattern matching. You're drawing pictures, at least in Neo4j using the Cypher query language. You're drawing pictures of patterns that you're interested in, and they're patterns learned by domain experts or, nowadays, even learned by algorithms. Then you say, "Hey, database, find me these kinds of suspicious patterns in this graph, in this network, and tell me about them."
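To make the pattern-matching idea concrete, here is a small Python sketch that looks for one shape in a payments graph: a flagged company paying into a bank, which pays out to another flagged company. Everything in it is hypothetical (the company names, amounts, and the `suspicious_flows` function), and the nested loop only imitates what a graph database would do declaratively from a drawn pattern.

```python
# Hypothetical payments as (payer, payee, amount_usd) relationships.
payments = [
    ("Shell Co A", "Big Bank", 900_000),
    ("Big Bank", "Shell Co B", 880_000),
    ("Bakery Ltd", "Big Bank", 12_000),
    ("Big Bank", "Flour Mill", 11_500),
    ("Shell Co B", "Small Bank", 870_000),
    ("Small Bank", "Shell Co A", 860_000),
]
banks = {"Big Bank", "Small Bank"}
flagged = {"Shell Co A", "Shell Co B"}  # e.g. named in a suspicious activity report


def suspicious_flows(payments, banks, flagged):
    """Match the pattern: flagged company -> bank -> different flagged company."""
    matches = set()
    for payer, via, _ in payments:
        if payer not in flagged or via not in banks:
            continue
        for sender, payee, _ in payments:
            if sender == via and payee in flagged and payee != payer:
                matches.add((payer, via, payee))
    return sorted(matches)


flows = suspicious_flows(payments, banks, flagged)
```

The ordinary bakery-to-flour-mill traffic passes through the same bank but does not match the pattern, which is the point: the query describes a shape, not a table scan.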

The ICIJ is pretty good at this now. They've done it for several large newsworthy items where they pour their data into a graph and they understand the flows of money in this case, or they've understood the hierarchical and opaque ownership of trusts and offshore accounts in other cases, and they're able to shine light on that by letting the database do the heavy lifting. We understand that a single human couldn't hold all that complexity in their mind, but by just asking the database, "Hey, do the heavy lifting. Find me the suspicious stuff and show me who it's coming from, who it's flowing through, because they're probably in trouble, and where it's going to," we can get a lot of value from that.

And by the way, you know, sitting here as we are in London, I will point out that 3,000 British companies appear in these files and it turns out that's more than any other country. So I guess we won something, right? We are the champions. Yay.

So yeah, what I really hope, all joking aside, is that the ICIJ keeps setting this trend. They go into a particular compliance area of finance and they shine a light. What could actually happen is that the institutions involved take that tech, because clearly the big finance companies have a brilliant array of technologists working for them, and simply in-house those techniques so they don't have to have the journalists doing it after the fact and causing embarrassment. So if any of you folks are out there in finance and you want to do this stuff, look at what the ICIJ are doing and copy it in-house and it will save you a lot of trouble.

Key takeaway no. 3

Graphs are superior for forensic analytics and for compliance and provenance.

Graph theory and graph data science

Nicki Watt: Excellent. Great. When I was looking at those articles it did mention graph data science in there. So there's graph theory and actually, I think in your book there's a whole chapter on predictive analysis with graph theory.

Jim Webber: Yes.

Nicki Watt: How important is it for people to really understand the fundamentals of graph theory and data science in order to actually gain insight into some of these things? And maybe you can talk a little bit about what graph data science actually is, because I get some questions a few times from clients where it's like, "Well, I'm already doing data science, so how is this different? How is graph data science going to help me?"

Jim Webber: There's quite a lot to unpack there. At the most normal level, you don't need to understand graph theory to take advantage of graph databases because they look just like normal domain models that you're persisting. In fact, the origins of Neo4j were as my boss, Emil, said. He had this idea that it was going to be a persistence mechanism for your Java domain model. It's kind of like the SmallTalk runtime. So if you're a normal software engineer, I beg to differ but, you know, we're not meant to be having...

Nicki Watt: It was a great language. It just didn't make it.

Jim Webber: I came to Neo4j not as a graph theoretician or a data scientist or anything that grand but just as a software nerd. I just felt that the way that I perceived a database happened to be how graphs do work, so I have stuff that's connected to other stuff, you know. Some of it's densely connected and some of it's sparsely connected and the relationships go in both directions and they have names. It's kind of like the ERD that we draw if we were going to use relational, right? But then you didn't have to go into the relational model. You were left at the ERD level, which is a lovely graph. So you don't have to care about this.

But then, once I got into graphs, you know, I kind of found that was the gateway drug. I was like, "Ooh, there's like 300 years of like research here called 'graph theory.'" Everyone kind of knows the terms. It's kind of amazing, right? It's like a really active part of mathematics, has been for years, and I think it forms one pillar of, if you like, the data science part of graph data science, and it's an analytic tradition that comes from maths.

So in the book, we wrote about link prediction. The easiest one, again, I'll fall back to a social graph because it's the one we all know. Like if I'm your friend, and you're a friend of Ross, then at some point I'm going to be a friend of Ross because you've effectively transitively expressed trust or good judgment. Or in this particular case, after a crowd judgment because Ross and I are both cowboys. So what graph theory lets us do with this notion of triadic closure is predict where relationships will appear in the graph or should appear. And equally, where they shouldn't appear.
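Triadic closure is simple enough to sketch directly. The Python below is a minimal illustration, not the algorithm from the book: it assumes an undirected friendship graph (the sample edges and the `predict_links` function are invented) and proposes a new link wherever two people share a friend but are not yet connected.

```python
from itertools import combinations

# Hypothetical undirected friendships.
friendships = [("Jim", "Nicki"), ("Nicki", "Ross"), ("Nicki", "Ian"), ("Ian", "Jim")]


def predict_links(friendships):
    """Return pairs that share at least one friend but are not yet friends.

    Each result maps a candidate pair to the mutual friends that would
    close the triangle.
    """
    neighbours = {}
    for a, b in friendships:
        neighbours.setdefault(a, set()).add(b)
        neighbours.setdefault(b, set()).add(a)

    predictions = {}
    for a, b in combinations(sorted(neighbours), 2):
        if b in neighbours[a]:
            continue  # already connected, nothing to predict
        mutual = neighbours[a] & neighbours[b]
        if mutual:
            predictions[(a, b)] = sorted(mutual)
    return predictions


predicted = predict_links(friendships)
```

Here the Jim-Nicki and Nicki-Ross friendships leave an open triangle, so the sketch predicts a Jim-Ross link, mirroring the example in the conversation.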

So if you hate me and you hate Ross, then it makes sense for Ross and I to become friends so that we could double hate you back, right? So there's all this kind of richness in graph theory, and we could do a bunch of other algorithms. We could find cliques. You could tell me the hierarchical chart, your organization chart of OpenCredo, which I'm sure is a perfect tree. Then I could take your email server and analyze it, and I would find the graph in that tree because you communicate with each other across layers and so on.

But then I could find things like the cliques in that. So let me see who likes working together. Who is the most important? Obviously, I think it should be the CTO that's the most important, but it may well be that there's some other member of staff on your team that has incredibly high centrality or incredibly high PageRank, right, so that loads of information flows through them and you hadn't realized it because the org chart doesn't make it apparent.
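As a rough sketch of how such a ranking can surface someone the org chart hides, here is a plain power-iteration PageRank in Python. The email edges and role names are hypothetical, and the damping factor of 0.85 is the conventional default rather than anything stated in the conversation; a graph database would ship a tuned implementation of this.

```python
def pagerank(edges, damping=0.85, iterations=100):
    """Plain power-iteration PageRank over directed (source, target) edges."""
    nodes = sorted({node for edge in edges for node in edge})
    out_links = {node: [] for node in nodes}
    for source, target in edges:
        out_links[source].append(target)

    rank = {node: 1.0 / len(nodes) for node in nodes}
    for _ in range(iterations):
        # Rank held by nodes with no outgoing edges is spread evenly.
        dangling = sum(rank[n] for n in nodes if not out_links[n])
        base = (1.0 - damping) / len(nodes) + damping * dangling / len(nodes)
        new_rank = {node: base for node in nodes}
        for source in nodes:
            for target in out_links[source]:
                new_rank[target] += damping * rank[source] / len(out_links[source])
        rank = new_rank
    return rank


# Hypothetical "who emails whom" edges: most people end up writing to Sam.
emails = [
    ("CTO", "Sam"), ("Dev1", "Sam"), ("Dev2", "Sam"),
    ("Sam", "CTO"), ("Dev1", "Dev2"),
]
ranks = pagerank(emails)
most_central = max(ranks, key=ranks.get)
```

In this toy data the highest-ranked node is Sam rather than the CTO, because more of the communication flows into Sam.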

So we've got all of these analytical tools that the mathematical folks have already figured out to figure out, you know, popular nodes, to figure out neighborhoods in our networks and so on. And what's nice about modern graph databases is you don't need to understand the internals of those. You can just say, "Oy, database, tell me who's popular or tell me which node is popular. Oy, database, tell me which nodes are similar. Oy, database, tell me about the cliques in this graph, the neighborhoods in this graph," that kind of thing. So that comes for free now. I mean, we have processing time, but that's the analytical tradition. I was a huge fan of that because, I've got to tell you, it blew me away, right? When I discovered this stuff it was like magic to me. I guess for many software people maths still seems magical.

Then more recently, there are these whippersnappers coming out of the data science and machine learning community and they're like, "Move over, Granddad. We've got new stuff."

What they're doing is they're saying, "Look, we've got this graph and the graph is wonderful," and often what we want to do is feature engineering over data to be able to build good models. But what the graph provides is more features. For example, in a document database, if we represented you and I as documents, we may well be able to extract features, you know, like age, profession, you know, place of birth, where you live, kind of stuff, right? And you might be able to build a model that predicts something useful.

But also imagine now that you had our position in the graph. All right? So now you can start to reason about my connections, about my centrality, about my PageRank. All of these things can become additional features. And what the graph data science folks are doing, in a nutshell, is trying to figure out how to take those really useful graph features and extract them in a way that preserves the properties of the graph but still feed them into their machine learning pipeline. So it turns out you can mix the analytical tradition and the kind of ML tradition if such a thing can be said, so I'll be able to take things like, "Hey, I'm really interested not just in Nicki, but I'm interested also about her PageRank and her cohorts' PageRank or their centrality or their other clique that they're in, the neighborhood that they're in." And those will become useful features that end up going into my ML pipeline in compulsive events that I'm going to process.
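A minimal sketch of that idea in Python: start from ordinary per-person attributes and append columns derived from each person's position in the graph. The people, attributes, and the `feature_rows` function are all hypothetical, and degree stands in here for richer measures such as centrality or PageRank.

```python
# Hypothetical records with the kind of "document" features mentioned above.
people = {
    "ann": {"age": 34, "city": "London"},
    "bill": {"age": 51, "city": "Leeds"},
    "dan": {"age": 29, "city": "London"},
}
knows = [("ann", "bill"), ("ann", "dan")]  # undirected relationships


def feature_rows(people, knows):
    """Append graph-derived columns (degree, neighbours' mean degree) to each record."""
    neighbours = {name: set() for name in people}
    for a, b in knows:
        neighbours[a].add(b)
        neighbours[b].add(a)

    rows = {}
    for name, attrs in people.items():
        degree = len(neighbours[name])
        neighbour_degrees = [len(neighbours[n]) for n in neighbours[name]]
        mean_neighbour_degree = sum(neighbour_degrees) / degree if degree else 0.0
        rows[name] = {
            **attrs,
            "degree": degree,
            "mean_neighbour_degree": mean_neighbour_degree,
        }
    return rows


rows = feature_rows(people, knows)
```

Each row now carries both the original attributes and two graph features, ready to be handed to whatever model-training code sits downstream.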

In fact, the most kind of leading-edge stuff that we do now are things like node embeddings where we'll take a whole node and we'll preserve that node's locality as a feature as we embed it into a vector for subsequent machine learning. I think you've got these two kind of complementary things at play now, the kind of old school graph theory analytics. I mean "old school" in an irreverent way, not in a kind of disparaging way. You've got this new cool stuff around ML and it turns out they fit together.

And look, we're not, like, the leaders in this. We're just fast followers. The leaders in this are the likes of Google, the University of Edinburgh, the kind of big brains in this area. They're leading the charge with graph ML, and we're following as fast as we can in trying to provide a kind of packaged version of that technology for normal folks like us.

Nicki Watt: Great. I think one of the things that we've also found is that sometimes you have things like NLP and those types of things that precede the graph. You found, for example, if you've got unstructured data, you can run NLP over that, do entity recognition, and then actually put that into the graph and then do the analysis from there. So, yeah, as you say, you can do it sort of afterward, during and before as well.

Jim Webber: Yes. As I understand it, that's how things like Amazon Alexa and stuff work. They have exactly that kind of pattern. So even the commodity stuff that we have around our houses is at some level graph powered in that way.

Nicki Watt: Yes. We've got these graphs, and we worked actually on a few projects, and it's always important to actually pay attention to the model in the graph, because whilst you've got all these things connected to each other, you've got to be able to actually programmatically query it and do something with it.

Key takeaway no. 4

Graph theory and graph data science (GDS) are complementary techniques. You don't need to understand the theory to use graphs.

Data modeling: common pitfalls

Nicki Watt: So what kind of pitfalls do you see people making when they're getting started with modeling graphs and things like that?

Jim Webber: Oh, Nicki, that's a really awful thing to ask me because you know I've messed this up. I'm good at telling you the pitfalls.

In graphs we have these idioms like what you draw is what you store. It's a really nice idiom because it means if you can draw a domain model on a whiteboard then that's the thing that you're going to persist in your graph database. So there's a real small semantic gap between your business stakeholders and the kind of bits and bytes on disk, which is overwhelmingly a wonderful thing. If you compare that to relational where you draw the domain model, the business stakeholder buys in, great. Then you've got this, like, cathedral, this wondrous thing in Oracle, for example, and you need to be a real expert to be able to translate between the two because the stakeholder looks at your Oracle model and they're like, "Er."

So one of the nice things about graphs is what you draw is what you store, and it's great until it isn't. And I'll tell you why. English. Now, I happen to be English and I'm functionally modeling in English and that means that sometimes I'm sloppy. Actually, we did honestly write this down in the "Graph Databases" book. We were working on an email forensic system early on at Neo4j, where a customer wanted to understand who's emailing whom, looking for potential things like people leaking secrets, which you can do with graph analysis. It was clearly a very graph-y problem.

So we did our detailed modeling and we applied the idiom "what you draw is what you store." So Ann emailed Dan. So a node representing Ann, an arrow representing "emailed" and then a node representing Dan. That seems fine. We also double-checked it so we also have this idea in graph modeling that if you can read a sentence and it sounds correct, then your model's probably correct.

So let me try and read that. Ann emailed Dan. That's perfect English, right? Obviously. So clearly the model is correct. What I've drawn is what I've stored. It's now on disk. Right, let's write some queries. Right then. So who was involved in an email that mentioned "fraud"? Obviously, fraudsters will write "fraud" in the title of their email. That query doesn't work. And why doesn't it work? Oh, it's because of English. Obviously, I'm not going to blame myself. It's the language that's the problem, right? It's so easy in English to say, "Ann emailed Dan." And it's such a shorthand but it's not a true reflection of what's happened. So we missed some of the domain knowledge there.

Actually, what really happened was that Ann sent an email. An email is an entity, so Ann sent that email. And then that email was to Dan. It may have been CC'ed to Bill. Right? But we missed out on a key entity there because our use of English was sloppy. "Ann emailed Dan" sounded right so we used it. When we put it in long form, "Ann sent an email to Dan and CC'ed Bill" is what we should have said. So it's really easy to convince yourself that the model is correct by reading it and it sounding sensible, when actually you've missed out some information from your model. You've got a lossy model.
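The difference between the two models can be shown side by side. The Python sketch below is an illustration under assumptions: the relationship names `SENT`, `TO` and `CC`, the subject lines, and the helper function are invented for the example rather than taken from the book. Once the email is a node of its own, the "who was involved in an email that mentioned fraud?" query has something to match against.

```python
# Lossy model: "Ann emailed Dan" as a single relationship. There is nowhere
# to hang the subject line or the CC list.
lossy = [("Ann", "EMAILED", "Dan")]

# Richer model: the email is a node of its own with properties, and people
# are connected to it by SENT, TO and CC relationships.
email_nodes = {
    "email-1": {"subject": "Re: fraud"},
    "email-2": {"subject": "Lunch?"},
}
relationships = [
    ("Ann", "SENT", "email-1"),
    ("email-1", "TO", "Dan"),
    ("email-1", "CC", "Bill"),
    ("Dan", "SENT", "email-2"),
    ("email-2", "TO", "Ann"),
]


def involved_in_emails_mentioning(word, email_nodes, relationships):
    """People who sent or received an email whose subject contains `word`."""
    hits = {
        email_id
        for email_id, props in email_nodes.items()
        if word.lower() in props["subject"].lower()
    }
    people = set()
    for source, label, target in relationships:
        if label == "SENT" and target in hits:
            people.add(source)  # the sender
        elif label in {"TO", "CC"} and source in hits:
            people.add(target)  # a recipient
    return people


involved = involved_in_emails_mentioning("fraud", email_nodes, relationships)
```

The same question cannot be answered from `lossy` at all, because the email entity, and therefore its subject and its CC'ed recipient, never made it into the model.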

I think that's one of the things that if you're new to graph databases or new to graph modeling, that's the thing that catches you out because while there are many wonderful things about graph modeling, it's simple, it's pleasant, it's humane, it's expressive, and you've done this, right, it is a joyous thing and I'm always reluctant to go back to relational modeling after doing this. Relational modeling has rails, right? You're on your rails. You create your ERD and then you've got a plethora of normal forms that you would choose to normalize to.

We don't have that in the graph world. So you have to be on your toes a little bit so that you can realize that "Ann emailed Dan" is actually a terrible graph and you've missed out entities and therefore you've got a lossy model. So beware of that when you start your journey into graphs. I think that English, or whatever sloppy natural language you're speaking, can catch you out, so just double check that entities haven't fallen through the gaps.

We categorize this as "I verbed a noun." Because instead of having an email, I say, "Oh, well, Ann emailed." And so look at that verbing of nouns because you may be missing out on important entities in your model. In fact, we do cover that in the book and if you want to double down on my embarrassment as a terrible developer, then you can read that in the "Graph Databases" book as well. It's a warts-and-all story.

Key takeaway no. 5

Look at that verbing of nouns because you may be missing out on important entities in your model.

Changes in the book since 2015

Nicki Watt: So for people that are starting out, the software world is changing so fast, it's so hard to keep up. Your book was released in 2015, which was about sort of four to five years ago, and whilst I do think graphs are timeless, writing is not. What has changed since then? I mean, for people that are maybe picking up the book now, are there things that are still valid? Are there things that they need to be aware of in terms of stuff that's moved on?

Jim Webber: Yes. I think you answered this question better than I could. I think, you know, the graph stuff is timeless. So the stuff in the book that's about modeling and so on, that's still perfectly valid. There's other stuff in there that may be kind of good for introductory. I think if you're reading the book today you should kind of read with a slight filter on some of the technical stuff that has changed.

In the 2015 edition of "Graph Databases" we talk about how you might extend the database using these things called "unmanaged extensions." That was because in that version of the database we had a REST API that you could put plugins in and make it richer, make it more domain-specific. While all those things still exist in Neo4j today, that's actually undergone quite a radical change. And now Neo4j has a binary RPC protocol called "Bolt" and the Cypher query language itself can host procedures, which you can write. So almost we've taken the model and flipped it, whereas it used to expose your custom code as REST endpoints effectively as URIs that you can fire at HTTPS, now it's inside the database and you call those custom procedures via Cypher.

Cypher itself has matured. We wrote this against Cypher for Neo4j 2.0 and Cypher has just gotten better. As we've got more experience with Cypher, there's a big team at Neo4j, about a third of the engineering team one way or the other are involved with the implementation and design of Cypher now. We've knocked off some rough edges. It's got more capacity, can do more things. When you see the Cypher examples in the book and then you look at, for example, other better books, I'm sure, or the Neo4j documentation, you'll spot better ways of doing it or you'll spot, "Hey, this didn't exist when 'Graph Databases' was written. This is new and interesting." So look at that. I think those are the things you should look at kind of as a developer.

As an ops person, we changed the clustering in Neo4j. So when we wrote the book, Neo4j had high-availability clustering but you were invited to kind of supply your own load balancing and stuff, which was fine for where we were. But of course in 2020 that sounds a bit horrible, doesn't it, even saying those words. So we changed the clustering model so that the database takes care of load balancing for you. And as a developer now, you simply take a driver in your chosen language, in Python or Java or one of the .NET languages or what have you, and then you see the database in an idiomatic way for yourself. You don't have to worry about “this is a cluster” and I've got three machines in New Zealand and two in London and three in New York. All that stuff's taken care of for you so there's a separation of concerns that didn't exist quite so well in the previous Neo4j. So in a way, it's simpler but you're going to have to mix in your reading of the book with some reading, like, from the Neo4j website or something like that to get a more accurate picture.

We had a pre-meeting to talk about what we wanted to talk about, like a meta-meeting, and you did tell me that it needed to be updated. I've taken it on board, talked to my folks at Neo4j and we're kicking around the idea of a version three of this now because I think you're right. You've embarrassed me into writing again. I hadn't thought that it was 2015 when this came out. In my mind it was like we did that book like a year or two ago and it's still fine, and then we have this conversation and it's like, oh, a lot's moved on actually. It's time for a refresh.

Nicki Watt: Excellent. Excellent. In terms of things you might cover in there, you spoke about Cypher but now there's also GQL out there. So is Cypher still around or is it dying or is GQL replacing it? What's going on there?

Jim Webber: So Cypher's still very active, right? There are still engineers at Neo4j working on it. And actually, outside of Neo4j, openCypher has been taken up by other databases and platforms.

GQL is Graph Query Language and it's the graph analog of SQL. And it's being standardized by the same ISO committee that standardized SQL, which is nice. In fact, that committee, if my memory serves, they're called the Database Query Languages, plural, Committee, which is funny because in history they've only ever done one language and that was SQL. So now they've finally earned their plural by looking at another language. And of course there's a good reason for that. You know, we're old enough to have seen SQL evolve, right, and SQL has subsumed every other competing tech that's come along. XML databases, into SQL. You know, kind of object databases, into SQL. They've managed to kind of...SQL's been kind of like the blob that's absorbed everything else.

ISO have decided that graphs are distinct enough to warrant their own query language so they're building this thing called GQL, which I think is a helpfully unimaginative name. Right? Graph Query Language, fine, it's a query language for graphs. The main input to that is SQL. I mean GQL, it's Cypher now. I have SQL on the brain now. It's Cypher. I think the reason for that is, of all the people out there, including these huge database companies, Oracle, Amazon, what have you, we have the most experience, the most deployed footprint using Cypher. In fact, loads of people like Cypher. So it's one of the big driving forces in the design of GQL. In fact you can see the way GQL's going, it's a declarative pattern-matching language, which sounds familiar because Cypher was the original declarative pattern-matching language for graphs.
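To make "declarative pattern matching" concrete, here is a minimal sketch in Python. It is a toy, not how Cypher or GQL is implemented: the `match` function, the triple-based graph, and the sample data are all assumptions made up for illustration. The point it shows is that the caller describes the shape of the data they want, and the engine works out how to find it. The pattern used below corresponds roughly to the Cypher query `MATCH (a)-[:FOLLOWS]->(b)-[:FOLLOWS]->(c) RETURN a, b, c`.

```python
from typing import Dict, List, Tuple

# A toy graph stored as (source, relationship_type, target) triples.
# The names and relationships are invented for this example.
Edge = Tuple[str, str, str]

EDGES: List[Edge] = [
    ("Jim", "FOLLOWS", "Nicki"),
    ("Nicki", "FOLLOWS", "Ian"),
    ("Ian", "WROTE", "Graph Databases"),
    ("Jim", "WROTE", "Graph Databases"),
]


def match(edges: List[Edge], pattern: List[Tuple[str, str, str]]) -> List[Dict[str, str]]:
    """Return every variable binding that satisfies all steps of the pattern.

    A pattern is a list of (variable, relationship_type, variable) steps.
    Reusing a variable name in two steps means "the same node".
    """
    bindings: List[Dict[str, str]] = [{}]
    for src_var, rel_type, dst_var in pattern:
        extended = []
        for binding in bindings:
            for src, rel, dst in edges:
                if rel != rel_type:
                    continue
                # A step like (a)-[:X]->(a) only matches a self-loop.
                if src_var == dst_var and src != dst:
                    continue
                # A variable bound by an earlier step must agree with this edge.
                if binding.get(src_var, src) != src:
                    continue
                if binding.get(dst_var, dst) != dst:
                    continue
                extended.append({**binding, src_var: src, dst_var: dst})
        bindings = extended
    return bindings


# "Who follows someone who follows someone else?"
friends_of_friends = match(EDGES, [("a", "FOLLOWS", "b"), ("b", "FOLLOWS", "c")])
print(friends_of_friends)  # [{'a': 'Jim', 'b': 'Nicki', 'c': 'Ian'}]
```

The caller never says which edge to scan first or how to join the two steps. A real query planner would make those choices using indexes and statistics, whereas this sketch simply tries every edge at every step.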

I think over the long term, over the medium term, you know, GQL should be where it's at in the same way that SQL's where it's at for relational. A lot of that, the brain trust for that is coming from Cypher. And if you're on Cypher today, if you've read the book and you've done some Cypher queries, that learning's going to be valid and you're going to have a really easy time moving onto GQL. My fervent hope is that GQL is just really good and everyone adopts it because then I can move from graph database to graph database, you know, and none of my knowledge is lost.

At the moment for example, 90% of what we see out there is Cypher, but then if you move into a new gig on a graph database that only supports something legacy like Gremlin, then it's like, "Oh, man, I have to relearn all this stuff." I think hopefully GQL will level that playing field in the same way that SQL did for relational. I think that's where it's heading.

I understand there's a draft of the spec due out imminently. I don't know what "imminent" means for standards bodies so I'm guessing, you know, in the next year. We'll have to see really how GQL looks for actual practitioners and get some feedback from normal data practitioners about where it's working and where some rough edges need to be hewn off.

Key takeaway no. 6

If you pick up the book, the overall advice is still good, but the way you package and deploy Neo4j has changed a lot, and Cypher has matured and is now a main input to the GQL standard.

Chief scientist: computer science challenges

Nicki Watt: I have so many questions but we can't go on forever so let me finish with this. So you are a chief scientist. What does that mean in terms of what you are actually working on at the moment? What is the future of graphs and what are some of the computer science angles that you're looking at?

Jim Webber: Oh, boy. I mean, it feels like there's infinite computer science to be done in databases. In general, right, it's a very CS discipline. The stuff that I'm particularly interested in is fault tolerance and scale for graphs. The model makes it interesting, right, so you could imagine a distributed graph database. There are some of those out there where you and I are nodes on different servers and there's a relationship between us, and the hardest thing now, which no consistency models directly address, is that if Jim follows Nicki, then Nicki has to believe she's followed by Jim. Keeping the reciprocity, so making those things reciprocally consistent, is a real challenge and none of the distributed databases out there do that today. They just take their chances, which is a worry because it means, you know, there's a potential for that data to become corrupted.

I've been working with some folks at the University of Newcastle up in the Northeast who have been building consistency models that not just maintain the basic ACID semantics but also maintain this reciprocal nature so that if you delete that relationship, both sides agree that the relationship is deleted and you don't get a non-deterministic query. If you query this side, you see a follower. If you query this side, no follower. I mean, that's just nuts, right? Because then you'll take that information and you'll use it to write other stuff into the graph which will spread that corruption.
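The failure mode described here can be sketched in a few lines of Python. This is an illustrative model only, under stated assumptions: the `Shard` class, the way each server stores its own half of a relationship, and the `crash_between` flag are all hypothetical and do not reflect how Neo4j or any particular distributed graph database stores data. It shows how a delete that is applied on one server but not the other leaves the two sides disagreeing.

```python
class Shard:
    """One server holding its local half of each relationship (hypothetical layout)."""

    def __init__(self, name):
        self.name = name
        self.follows = {}      # node -> set of nodes it follows (outgoing half)
        self.followed_by = {}  # node -> set of nodes following it (incoming half)


def add_follow(src_shard, dst_shard, follower, followee):
    """Record both halves of follower -> followee, one half on each shard."""
    src_shard.follows.setdefault(follower, set()).add(followee)
    dst_shard.followed_by.setdefault(followee, set()).add(follower)


def delete_follow_unsafe(src_shard, dst_shard, follower, followee, crash_between=False):
    """Delete the two halves one after the other, with no coordination."""
    src_shard.follows.get(follower, set()).discard(followee)
    if crash_between:
        return  # simulated failure: the second half is never removed
    dst_shard.followed_by.get(followee, set()).discard(follower)


def is_reciprocal(src_shard, dst_shard, follower, followee):
    """True when both shards give the same answer about the relationship."""
    out_side = followee in src_shard.follows.get(follower, set())
    in_side = follower in dst_shard.followed_by.get(followee, set())
    return out_side == in_side


london, new_york = Shard("london"), Shard("new_york")
add_follow(london, new_york, "Jim", "Nicki")
before = is_reciprocal(london, new_york, "Jim", "Nicki")

delete_follow_unsafe(london, new_york, "Jim", "Nicki", crash_between=True)
after = is_reciprocal(london, new_york, "Jim", "Nicki")

print(before, after)  # True False
```

After the simulated failure, asking Jim's shard returns no relationship while asking Nicki's shard still returns Jim as a follower, which is the non-deterministic query result described above. A consistency model that preserves reciprocity would have to make the two halves change together or not at all.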

So I've been working on that. It sounds really dull to most normal humans but I'm a consistency model nerd so this stuff's really good for me. But more broadly, I mean, across Neo4j I think there's a lot of hard work going on in language design for GQL, and consequently things like query planning and optimization, which is a really hardcore piece of computer science. There's a bunch of stuff going on down in the weeds, low-level IO bit-twiddling and understanding how to get the most from modern computers, and it's just like a whole range of very nerdy computer science stuff right the way up to people who are doing R&D on how you visualize this stuff as well.

You know, you and I are quite used to ball, stick, ball. There are other folks for whom that's a completely meaningless visualization, particularly at scale. Like, a billion ball, stick, balls is not terribly useful, so how do I visualize that stuff? There are incredible human-computer interaction challenges happening up there. So spoilt for choice, really. There's a lot of CS going on and no perceivable end to it but it's all tremendously exciting stuff.

Nicki Watt: The third edition maybe?

Jim Webber: Yes, maybe. Maybe. I think that was more of a kind of, "Hey, come and work at Neo4j," pitch than it was a pitch for a third edition but, yes, I think some of that we have to cover, right, because it really is now what the kind of contemporary graph database community does.

Nicki Watt: Yeah. Fantastic, Jim. Well, thanks so much. I think it's been awesome as always to chat with you and, yeah, been great.

Jim Webber: It's been lovely chatting with you again and stay safe, and I'll catch you on the other side of this zombie pandemic in person.

Nicki Watt: Indeed, indeed. Thanks, Jim Webber.

Jim Webber: Cheers.

Preben Thoro: Thank you. Thank you so much, both of you.

About the authors

Dr. Jim Webber is Neo4j’s Chief Scientist and Visiting Professor at Newcastle University. At Neo4j, Jim works on fault-tolerant graph databases and co-wrote Graph Databases (1st and 2nd editions, O’Reilly) and Graph Databases for Dummies (Wiley).

Nicki Watt is the Chief Technology Officer for OpenCredo, responsible for the overall direction and leadership of technical engagements. A techie at heart, her core expertise lies in problem-solving and enabling pragmatic, practical solutions. Over the years at OpenCredo, Nicki has worn many hats, which have included the development, delivery, and leading of large-scale platform and application development projects involving Cloud, DevOps, and Data. Nicki is also co-author of the book Neo4j in Action.